Trust, but Observe: The 'human-in-the-loop' guardrails you need before letting your systems self-heal

- Apr 8

It is April 2026, and the landscape of Information Technology has undergone a radical transformation. We have moved past the era of simple alerts and reactive monitoring. Today, Agentic AI and autonomous workflows are no longer experimental; they are the backbone of high-performing engineering teams. But as we hand over the keys to our digital infrastructure to self-healing systems, a critical question emerges: Who is watching the watchers?

At Visibility Platforms, we have seen a massive surge in organizations rushing to implement autonomous remediation. The promise is intoxicating: systems that identify a memory leak, spin up fresh containers, and redirect traffic before a single user notices a lag. However, the transition from manual intervention to full autonomy is fraught with risk. Without the right guardrails, an autonomous system can quickly become an "unforgiving" engine of destruction, compounding errors at machine speed.

In our view, the future of operational excellence isn't about removing humans from the equation; it’s about redefining their role. We believe in a "Trust, but Observe" philosophy. You must trust your agents to act, but you must observe every decision, every context, and every outcome with clinical precision.

The Dawn of the Agentic Era

The shift toward autonomous systems has been accelerated by the rise of the Agentic Strategy. We are no longer talking about simple "if-this-then-that" scripts. Modern AI agents can perform complex root cause analysis (RCA), query multiple data sources, and execute multi-step fixes across distributed environments.

This is a game-changer for Platform Engineering teams struggling with the sheer scale of cloud-native architectures. But as these agents become more sophisticated, the "black box" problem intensifies. If an agent restarts a production database at 3:00 AM, do you know why it did it? Did it have the deterministic context required to make that call, or was it hallucinating a correlation?

Why "Set and Forget" is a Dangerous Myth

There is a common misconception that self-healing means "no-ops." We anticipate that those who buy into this myth will face the most significant outages of the decade. The reality is that autonomous systems require more governance, not less.

The risk is not just a technical failure; it is a trust failure. When a system acts on its own and fails, the recovery time is often longer because the human engineers have lost the "mental map" of the current state. This is why we advocate for the Human-in-the-Loop (HITL) model. This isn't about slowing things down; it’s about providing the safety net that allows you to move faster with confidence.

The Problem of Contextual Blindness

AI agents are brilliant at pattern matching, but they often lack the "story" behind the data. As we’ve discussed before, observability is storytelling. Without the narrative of business impact, seasonal trends, or recent deployments, an agent might "fix" a spike in traffic that was actually a planned marketing campaign, effectively killing your revenue in the name of system stability.
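One way to give an agent part of that "story" is to have it consult a calendar of planned business events before it treats a spike as an incident. The sketch below is illustrative only: `PlannedEvent`, `explain_spike`, and `decide` are hypothetical names, and the calendar is assumed to be a simple in-memory list rather than any particular product's API.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class PlannedEvent:
    """A known business event such as a marketing campaign (hypothetical type)."""
    name: str
    start: datetime
    end: datetime
    affected_services: frozenset


def explain_spike(service: str, observed_at: datetime,
                  calendar: list) -> Optional[PlannedEvent]:
    """Return the planned event that covers this spike, or None if unexplained."""
    for event in calendar:
        in_window = event.start <= observed_at <= event.end
        if in_window and service in event.affected_services:
            return event
    return None


def decide(service: str, observed_at: datetime, calendar: list) -> str:
    """Hold remediation when business context already explains the anomaly."""
    event = explain_spike(service, observed_at, calendar)
    if event is not None:
        return f"hold: spike on {service} matches planned event '{event.name}'"
    return f"investigate: no planned event explains the spike on {service}"
```

In practice the "hold" branch would still notify a human; the point is only that the agent does not throttle traffic it was supposed to expect.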

The Essential Guardrails for 2026

To automate safely, organizations must implement specific, non-negotiable guardrails. At Visibility Platforms, we prioritise these four pillars when helping our clients reach the next level of operational maturity:

1. Approval Gates for High-Impact Actions

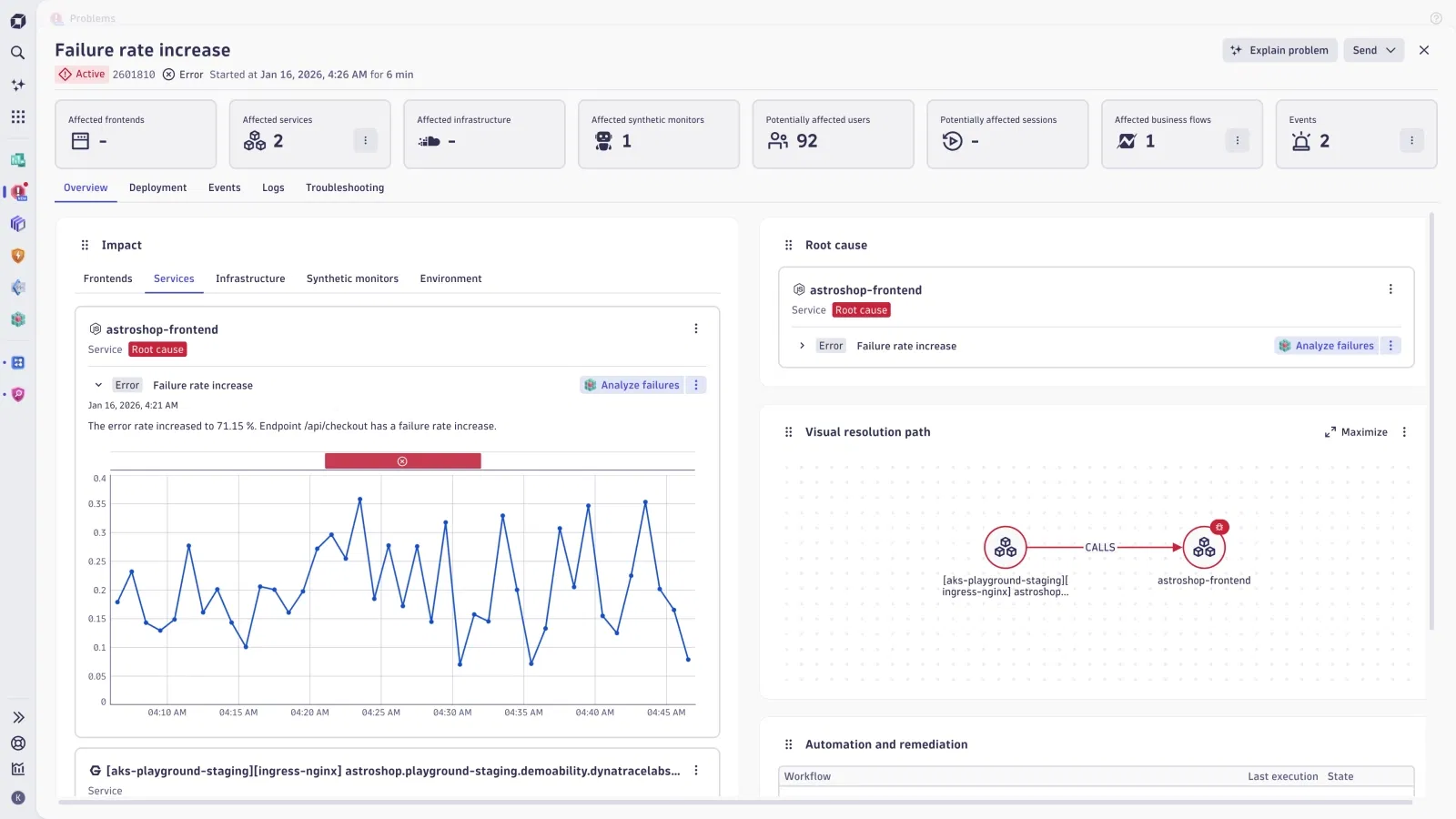

Not all fixes are created equal. While clearing a cache or restarting a non-critical microservice can be fully automated, actions like resource scaling, database schema changes, or traffic rerouting should require a "Human-in-the-loop" approval.

Modern platforms now allow for exception triage workflows. The agent does the legwork (it identifies the issue, gathers the logs, and proposes the fix), and the human simply hits "Approve" via a Slack or Teams integration. This maintains the momentum of automation while ensuring a human is accountable for major shifts.
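A minimal sketch of such a gate follows. The action names and their impact levels are assumptions drawn from the examples above, and `request_approval` stands in for whatever chat integration you use; it is a hypothetical callback, not a real Slack or Teams API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class Impact(Enum):
    LOW = "low"
    HIGH = "high"


# Assumed classification, based on the examples in the text.
ACTION_IMPACT = {
    "clear_cache": Impact.LOW,
    "restart_noncritical_service": Impact.LOW,
    "scale_resources": Impact.HIGH,
    "alter_db_schema": Impact.HIGH,
    "reroute_traffic": Impact.HIGH,
}


@dataclass
class Proposal:
    action: str
    evidence: list = field(default_factory=list)  # logs the agent gathered


def run_with_gate(proposal: Proposal,
                  execute: Callable[[str], None],
                  request_approval: Callable[[Proposal], bool]) -> str:
    """Execute low-impact actions directly; ask a human before high-impact ones."""
    # Unknown actions are treated as high impact, so the gate fails closed.
    impact = ACTION_IMPACT.get(proposal.action, Impact.HIGH)
    if impact is Impact.HIGH and not request_approval(proposal):
        return "rejected"
    execute(proposal.action)
    return "executed"
```

Defaulting unknown actions to high impact is a deliberate choice here: a new action type should have to earn its way onto the fully automated list.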

2. Deterministic Context and Logic

We must move away from "black box" AI. Every action taken by an autonomous system must be grounded in deterministic data. This means the AI isn't just "guessing" based on a probability; it is following a logic path defined by real-time telemetry.

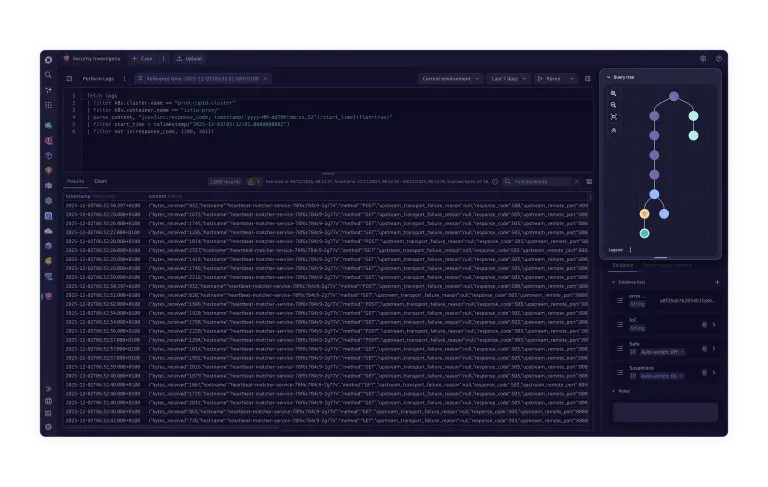

Using tools like Apica or Dynatrace, we can provide agents with a service dependency map that shows exactly how a failure in one area impacts another. This helps prevent the "flapping" effect, where an agent keeps fixing a symptom while the underlying cause keeps re-triggering it.
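To illustrate the idea, the sketch below walks a dependency map from the alerting service down to the unhealthy services that have no unhealthy dependencies of their own. The map is assumed to be a plain dictionary exported from your tooling; `root_causes` is a hypothetical helper and does not reflect how Apica or Dynatrace expose topology data.

```python
def root_causes(alerting: str,
                depends_on: dict,
                unhealthy: set) -> set:
    """Find unhealthy services, reachable from the alerting one, whose own
    dependencies are all healthy. These are the candidates to fix first."""
    roots = set()
    seen = set()
    stack = [alerting]
    while stack:
        service = stack.pop()
        if service in seen:  # guards against cycles in the map
            continue
        seen.add(service)
        bad_deps = [d for d in depends_on.get(service, []) if d in unhealthy]
        if service in unhealthy and not bad_deps:
            roots.add(service)
        # Only follow unhealthy edges: healthy dependencies are not suspects.
        stack.extend(bad_deps)
    return roots


# Example: the web tier alerts, but the database is the real problem.
topology = {"web": ["api"], "api": ["db", "cache"], "db": [], "cache": []}
print(root_causes("web", topology, unhealthy={"web", "api", "db"}))  # {'db'}
```

An agent that restarts `web` here is treating a symptom; the map points it at `db` instead.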

3. Risk-Triggered Escalation

A self-healing system must know its own limits. If an agent attempts a fix and the metrics do not improve within a predefined "wait-and-see" window, it must immediately stop and escalate to a human. This prevents the system from entering an infinite loop of "corrections" that could lead to a total site outage.
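The pattern can be sketched as a single attempt followed by a bounded observation window. Everything named here (`apply_fix`, `read_metric`, `is_healthy`, `escalate`, and the default timings) is a placeholder you would wire to your own tooling.

```python
import time
from typing import Callable


def remediate_or_escalate(apply_fix: Callable[[], None],
                          read_metric: Callable[[], float],
                          is_healthy: Callable[[float], bool],
                          escalate: Callable[[str], None],
                          window_seconds: float = 300.0,
                          poll_seconds: float = 15.0,
                          sleep: Callable[[float], None] = time.sleep,
                          clock: Callable[[], float] = time.monotonic) -> str:
    """Apply one fix, watch the metric for a bounded window, then stop."""
    apply_fix()
    deadline = clock() + window_seconds
    while clock() < deadline:
        if is_healthy(read_metric()):
            return "recovered"
        sleep(poll_seconds)
    # No retry on purpose: a second automated attempt is how loops start.
    escalate("metrics did not improve within the wait-and-see window")
    return "escalated"
```

Passing `sleep` and `clock` as parameters is a small design choice that makes the window testable without waiting for real time to elapse.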

4. The "Black Box" Action Recorder

Just as aeroplanes have flight data recorders, your AI agents need an audit trail. Every query the agent runs, every log it reads, and every command it executes must be recorded in your observability platform. This allows for post-incident reviews of the AI’s behavior, turning every autonomous action into a learning opportunity for the team.
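As a rough sketch, the recorder can be as simple as an append-only stream of structured events. `ActionRecorder` and its `sink` callback are hypothetical; in a real deployment the sink would forward each line to whichever observability platform you already run.

```python
import json
import time
from typing import Any, Callable


class ActionRecorder:
    """Append-only audit trail for one agent, emitted as JSON lines."""

    def __init__(self, sink: Callable[[str], None], agent_id: str,
                 clock: Callable[[], float] = time.time) -> None:
        self._sink = sink          # e.g. a log shipper (assumed, not a real API)
        self._agent_id = agent_id
        self._clock = clock
        self._seq = 0

    def record(self, kind: str, detail: dict) -> None:
        """Record one step: a query run, a log read, or a command executed."""
        self._seq += 1
        event: dict[str, Any] = {
            "seq": self._seq,       # ordering survives clock skew
            "ts": self._clock(),
            "agent": self._agent_id,
            "kind": kind,
            "detail": detail,
        }
        self._sink(json.dumps(event, sort_keys=True))


# Usage: print stands in for the real sink.
recorder = ActionRecorder(print, agent_id="remediator-1")
recorder.record("query", {"source": "metrics", "expr": "memory usage by pod"})
recorder.record("command", {"action": "restart_noncritical_service"})
```

The sequence number matters as much as the timestamp: in a post-incident review you want to replay the agent's steps in the order it took them.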

Building the Unified Data Plane

The biggest hurdle to safe automation is siloed data. If your AI agent only sees logs, but your human engineers are looking at traces and metrics in a different tool, you have a recipe for disaster.

The "Single Pane of Glass" may be a 2010 myth, but the Unified Data Plane is the 2026 reality. We believe that to truly master self-healing, you must unify your workflows. Whether you are using Hortium for edge visibility or specialized tools for Kubernetes, the data must flow into a central nervous system.

Maturity Over Magic: Our Strategic Approach

At Visibility Platforms, we don't just sell tools; we provide the 7 secrets to observability that lead to tangible business value. We understand that the jump to self-healing is a journey of maturity.

Our framework for a staged rollout includes:

Autonomously Alerting: The system detects issues with high accuracy and provides a pre-packaged investigation for humans.

Autonomously Decisioning: The system proposes the correct course of action, awaiting a human "thumbs up."

Interestingly, we’ve found that the most successful companies are the ones that are unafraid to start small. They automate the "toil" (the boring, repetitive tasks that cause SRE burnout) while keeping their best minds focused on the complex architectural challenges that AI isn't yet ready to solve.

The Future is Collaborative

Can we do more with less? In the current economic climate, the pressure to automate is immense. But let this be a stark reminder: speed without control is just a faster way to fail.

As we look toward the remainder of 2026, the teams that "win" will be those that treat their AI agents as collaborators, not replacements. By building robust "human-in-the-loop" guardrails, you aren't just protecting your systems; you are empowering your people to lead the pack in innovation rather than just keeping the lights on.

Are you ready to evolve your digital performance? Whether you are navigating a complex data migration or looking to implement advanced agentic workflows, our team is here to ensure you do it safely.

Visibility Platforms: Optimising the tools you have, strategic guidance for the ones you need.

Want to see how we’ve helped industry leaders achieve operational maturity? Explore our work or reach out to us for a consultation today.
